This article was automatically translated from the original Turkish version.

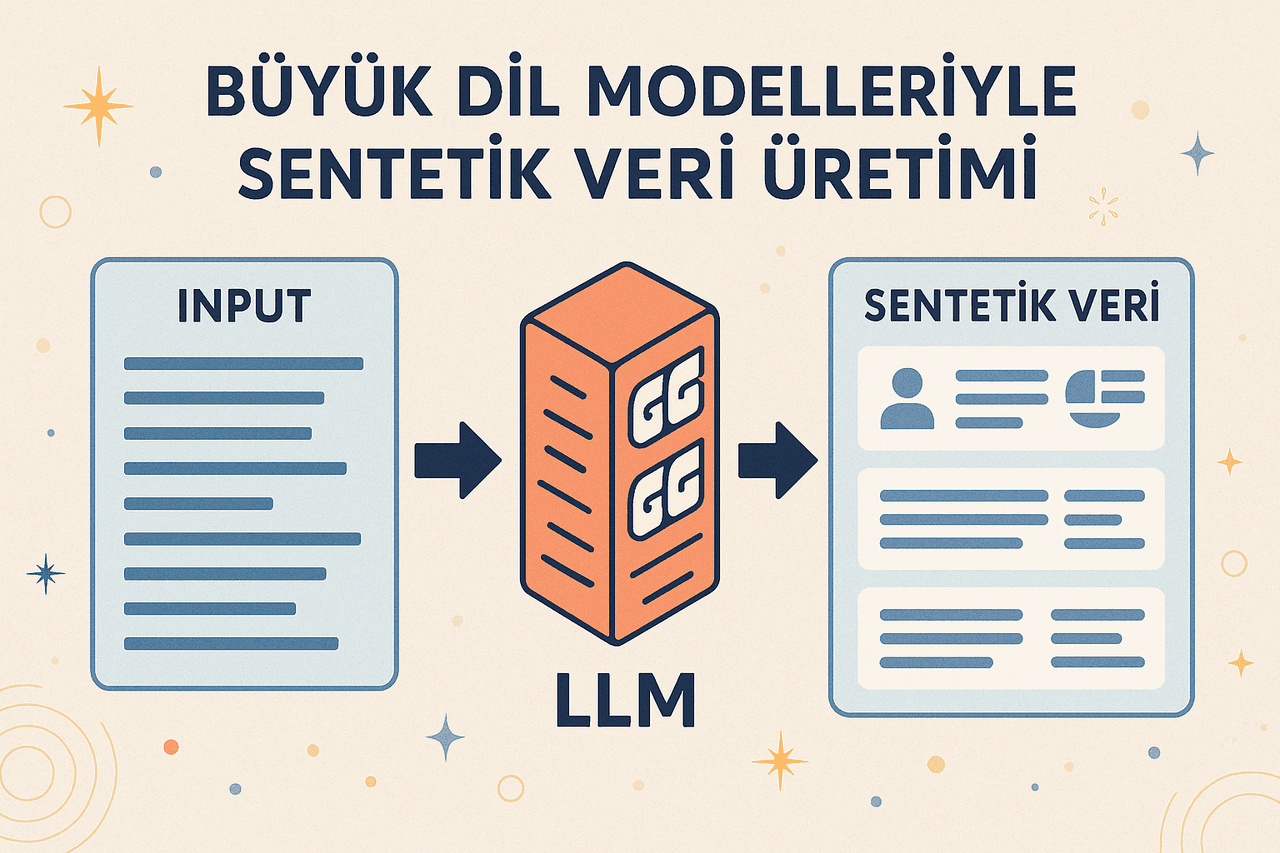

Synthetic data generation with large language models (LLMs) is the process of creating artificial data that resembles real data in quality, using the ability of LLMs to generate diverse content such as text, code, or labeled examples. This approach enables the creation of high-quality datasets that can either substitute for or complement real data, particularly where labeled data is scarce, privacy requirements are strict, or data collection is costly.

Image generated by artificial intelligence.

In recent years, large language models such as OpenAI’s GPT-4, Meta’s Llama 3, and Anthropic’s Claude have been used to generate large-scale synthetic datasets, not only for natural language processing tasks but also in software engineering areas such as code generation and bug fixing. Through their transformer-based architectures, large-scale pre-training, and specialized fine-tuning, these models can produce labeled, meaningful data samples that closely mimic real data for a variety of tasks.

The methods used in synthetic data generation differ in how the model is guided, how output quality is controlled, and how the results are validated:

In prompt-based generation, the model is directed by a prompt prepared in advance for a specific task. The stacked layers of the transformer process this prompt through attention mechanisms and generate output conditioned on that context. In the zero-shot approach, only a natural language description of the task is provided; in one-shot and few-shot approaches, the prompt also contains one or several worked examples, and the model infers the task from them (in-context learning) without any weight updates. The choice, order, and position of the examples within the context window influence the result, and the output tends to resemble the distribution of the examples.
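The difference between zero-shot and few-shot prompting can be sketched as plain prompt assembly. This is a minimal illustration, not a prescribed template: the `Example` type, the `build_prompt` helper, the `Text:`/`Label:` layout, and the sentiment task are all assumptions made for the example, and the resulting string would still need to be sent to a model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Example:
    """One in-context demonstration (hypothetical structure)."""
    text: str
    label: str


def build_prompt(task: str, examples: list[Example], query: str) -> str:
    """Assemble a zero-, one-, or few-shot prompt depending on len(examples)."""
    parts = [task.strip()]
    for ex in examples:
        parts.append(f"Text: {ex.text}\nLabel: {ex.label}")
    # The final block is left open so the model completes the label.
    parts.append(f"Text: {query}\nLabel:")
    return "\n\n".join(parts)


TASK = "Classify the sentiment of each text as positive or negative."
shots = [
    Example("The battery lasts all day.", "positive"),
    Example("The screen cracked within a week.", "negative"),
]

zero_shot = build_prompt(TASK, [], "Delivery was fast.")
few_shot = build_prompt(TASK, shots, "Delivery was fast.")
print(few_shot)
```

Because example order can affect the output, a generation pipeline may shuffle or rotate the demonstrations between calls to reduce that dependence.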

In topic-controlled generation, general topics or themes are defined first, and detailed examples are then synthesized under each topic. In a multi-step pipeline, a topic selection step comes first, after which a contextual sampling component generates different variants for each theme. This ensures content diversity while keeping each generation aligned within a “topic embedding” space.
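A two-step pipeline of this kind can be sketched as follows. The topic list, the style list, and `fake_llm` are hypothetical placeholders; `fake_llm` only echoes its prompt and stands in for a real model call.

```python
import random

TOPICS = ["billing", "shipping", "account security"]  # hypothetical topics
STYLES = ["a short question", "a formal complaint", "a casual chat message"]


def topic_prompts(topics, styles, per_topic, seed=0):
    """Yield (topic, prompt) pairs: fix a topic first, then sample variants under it."""
    rng = random.Random(seed)
    for topic in topics:
        # Sampling without replacement gives distinct variants per topic.
        for style in rng.sample(styles, k=min(per_topic, len(styles))):
            yield topic, f"Write {style} from a customer about {topic}."


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call; it just echoes the request."""
    return f"[generated text for: {prompt}]"


dataset = [
    {"topic": topic, "text": fake_llm(prompt)}
    for topic, prompt in topic_prompts(TOPICS, STYLES, per_topic=2)
]
print(len(dataset), "samples")
```

Keeping the topic as an explicit field on each record makes it possible to check coverage per topic after generation.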

In feedback-loop generation, the outputs produced by the model pass through automated or human-in-the-loop quality control; erroneous or weak examples are returned to the model for reprocessing. Technically, generated samples are labeled, the model’s loss function is reweighted, and this information is carried into the next generation cycle. This enables the model to learn from its generation errors and improve its consistency and accuracy.
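The control flow of such a loop can be sketched at the prompt level. This is a simplification: it returns the critique to the model as extra prompt text rather than reweighting a loss function, and `fake_generate`, `check`, and the word-count criterion are hypothetical stand-ins for a real model and a real quality check.

```python
def fake_generate(prompt: str, attempt: int) -> str:
    """Hypothetical model call: the first attempt is too short, later ones improve."""
    if attempt == 0:
        return "Too short."
    return "The parcel arrived two days late and the box was damaged."


def check(sample: str, min_words: int = 5):
    """Toy automated quality check; returns (ok, critique)."""
    n = len(sample.split())
    if n < min_words:
        return False, f"Only {n} words; write at least {min_words}."
    return True, ""


def generate_with_feedback(prompt: str, max_rounds: int = 3):
    """Regenerate until the check passes, attaching the latest critique to the prompt."""
    current = prompt
    for attempt in range(max_rounds):
        sample = fake_generate(current, attempt)
        ok, critique = check(sample)
        if ok:
            return sample, attempt + 1
        # Feed the failure back so the next round can correct it.
        current = f"{prompt}\n\nPrevious attempt: {sample}\nFeedback: {critique}"
    return None, max_rounds  # give up: no sample passed the check


sample, rounds = generate_with_feedback("Write a customer complaint about shipping.")
print(rounds, sample)
```

Capping the number of rounds keeps a persistently failing prompt from looping forever; samples that never pass are simply dropped.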

In the RAG architecture, a retrieval module first selects relevant fragments from real data sources such as document collections or databases; these fragments are then passed to the transformer-based generation component as additional context. This allows the model to generate content based not only on its learned weights but also on dynamically selected external information. Structurally, it can be viewed as a two-stage system: the retriever handles the “information retrieval” task, while the generator performs the “regeneration” task.
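The retrieve-then-generate flow can be illustrated with a toy retriever. The sketch below assumes a three-sentence in-memory corpus and ranks documents by shared tokens, which is a deliberately crude substitute for the dense or sparse retrievers used in practice; `DOCS`, `retrieve`, and `build_rag_prompt` are hypothetical names, and the final prompt would still be passed to a generator model.

```python
import re

DOCS = [
    "Refunds are issued within 14 days of receiving the returned item.",
    "Standard shipping takes 3 to 5 business days.",
    "Passwords must be at least 12 characters long.",
]


def tokens(text: str) -> set[str]:
    """Lowercase alphanumeric tokens of a text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[str], k: int = 1) -> list[str]:
    """Rank documents by the number of tokens shared with the query; keep the top k."""
    q = tokens(query)
    ranked = sorted(docs, key=lambda d: len(q & tokens(d)), reverse=True)
    return ranked[:k]


def build_rag_prompt(query: str, docs: list[str], k: int = 1) -> str:
    """Place the retrieved fragments in front of the generation instruction."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs, k))
    return (
        "Using only the context below, write a question-answer pair.\n"
        f"Context:\n{context}\n"
        f"Topic: {query}"
    )


QUERY = "how many days does standard shipping take"
top = retrieve(QUERY, DOCS)
prompt = build_rag_prompt(QUERY, DOCS)
print(prompt)
```

Grounding each synthetic sample in a retrieved fragment also leaves a trace of which source passage it came from, which helps later fact checking.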

In execution feedback for code generation, generated code samples are run against automated testing frameworks such as unit test suites; code that works correctly is included in the dataset, while faulty code is discarded or sent back with additional prompts for correction. As a result, only functional, compilable, test-passing code snippets enter the model’s training data, which systematically improves code generation performance.
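A minimal version of this filter can be written with Python's built-in `exec`. The candidate snippets, the `add` function name, and the test cases are invented for illustration, and running untrusted generated code this way is unsafe outside a sandbox; a real pipeline would isolate execution in a separate process or container with time limits.

```python
CANDIDATES = [
    "def add(a, b):\n    return a + b\n",  # correct
    "def add(a, b):\n    return a - b\n",  # runs, but gives wrong results
    "def add(a, b)\n    return a + b\n",   # syntax error (missing colon)
]

# Each test case is (arguments, expected result).
TESTS = [((1, 2), 3), ((-1, 1), 0), ((0, 0), 0)]


def passes_tests(source: str, func_name: str, tests) -> bool:
    """Execute the candidate and check it against every (args, expected) pair."""
    namespace = {}
    try:
        exec(source, namespace)  # NOTE: untrusted code needs a real sandbox
        func = namespace[func_name]
        return all(func(*args) == expected for args, expected in tests)
    except Exception:
        # Syntax errors, missing functions, and runtime errors all count as failures.
        return False


accepted = [src for src in CANDIDATES if passes_tests(src, "add", TESTS)]
print(f"{len(accepted)} of {len(CANDIDATES)} candidates accepted")
```

Rejected candidates could instead be sent back to the model together with the failing test, which combines this filter with the feedback-loop idea.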

Synthetic data generated by large language models can be applied across numerous domains, primarily natural language processing and software engineering. In tasks such as text classification, sentiment analysis, or topic detection, it balances and supplements limited data; in question-answering systems, dialogue bots, and text summarization applications, it expands the training sets. In software development, it provides functional code examples that strengthen capabilities such as code completion, cross-language translation, and bug fixing.

Synthetic data serves as an alternative when access to real data is limited, reducing the cost and time of human labeling while improving model generalization through the wide variety of examples it can cover. In privacy-sensitive scenarios, it also provides datasets that reflect linguistic patterns without containing personal information, which facilitates compliance with data protection regulations.

The tendency of LLMs to hallucinate can lead to accuracy issues in synthetic data, particularly in knowledge-intensive tasks. Generated examples may deviate from real-world distributions and degrade the performance of models trained on them; furthermore, biases present in the original training data can carry over into the synthetic data. Over the long term, models trained exclusively on synthetic data risk “model collapse,” in which successive generations progressively lose the diversity of the original data distribution.
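The recursive-training risk can be illustrated with a toy simulation in which a Gaussian stands in for the model. Each generation is fitted only to samples drawn from the previous one, so estimation error accumulates. The sample size, generation count, and seed are arbitrary assumptions, and this is an analogy rather than an experiment on an actual language model.

```python
import random
import statistics


def simulate_generations(n_samples: int = 20, generations: int = 30, seed: int = 0):
    """Refit a Gaussian to its own samples each generation; return the sigma history."""
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0 stands in for the real data
    history = [sigma]
    for _ in range(generations):
        samples = [rng.gauss(mu, sigma) for _ in range(n_samples)]
        # The next "model" sees only the previous model's output.
        mu = statistics.fmean(samples)
        sigma = statistics.stdev(samples)
        history.append(sigma)
    return history


history = simulate_generations()
print(f"sigma at generation 0: {history[0]:.3f}")
print(f"sigma at generation {len(history) - 1}: {history[-1]:.3f}")
```

With small sample sizes the estimated spread wanders away from its starting value, and the exact trajectory depends on the seed. Mixing real data back in at each generation is one way to anchor the estimate.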


